
Here are some resources I've collated relating to the recent WPA2 Wi-Fi vulnerability.

I would highly recommend following Mathy Vanhoef (aka @vanhoefm on Twitter), who has now been thrust into the limelight after being instrumental in the discovery of this vulnerability.

https://tools.cisco.com/security/center/content/CiscoSecurityAdvisory/cisco-sa-20171016-wpa

https://www.kb.cert.org/vuls/id/228519

https://community.ubnt.com/t5/UniFi-Updates-Blog/FIRMWARE-3-9-3-7537-for-UAP-USW-has-been-released/ba-p/2099365

http://hpe.to/60158AqFZ

https://www.krackattacks.com/


For a long time I was not a huge fan of predictive planning. I put this down for the most part to the lack of an appropriate tool and also the influence of an employer. Much of my early design years were spent performing AP-on-a-stick RF signal readings. The good thing about having done countless surveys of this kind is the experience gained in understanding how RF is affected by different surfaces. I know first-hand how walls that look the same can attenuate the RF of Wi-Fi in weird and wonderful ways, differing from one wall to the next, and how some windows seem to reflect signal more than others. The factual evidence you gain builds your working knowledge of how RF propagates through different spaces - and it’s all “factual” until your colleague’s laptop shows wildly different results in the exact same software.

Predictive planning was thrust upon me when it was decided (by an employer) that physical presence on site was not feasible for every design. With inadequate tools this process was difficult, if not futile. The estimated signal propagation just never felt trustworthy, so a conservative signal adjustment allowed for ever so slightly better educated guesswork. I wouldn’t dare try to estimate the margin of error. From a professional standpoint we know that this simply isn’t excusable, but as long as we learn from our mistakes, right?

As my design and planning work has evolved I have developed a strong preference for a hybrid approach. Most of the upfront planning work is done in a predictive planning tool - now that I have an industry standard application - and I will always visit a site where possible to get a visual understanding of scale, construction and layout. I like to see a space and visualise areas to try and think like a client and an AP from a transmission perspective. For high AP mount positions I like to get up there and scope out the coverage zone from that point of view. This can sound pretty weird when explaining my purpose to an uninitiated person who is accompanying me on a walk-through of a site. It gets even weirder when I point my arms out at varying angles to determine an appropriate directionality for an antenna. If something needs testing I’ll rig up a generic AP for rapid testing, though there is a piece of me that still yearns for an AP-on-a-stick test once in a while, inconvenient as it is.

Once a deployment is done, tuning can only be done with real surveyed data. Capture, test, capture and repeat if necessary. The software available today has amazing visual analysis capabilities which make channel and transmit power tuning a much more achievable task. I look forward to the day when I can open up a case and deploy multiple miniature drones which will map out an environment’s RF characteristics both pre and post implementation.
We’re basically there technologically. Someone should productise this; I’d like to work for them!

Very High Density venue environments are my favourite to design for. There is a real mixture of adjacent and non-adjacent spaces to account for, each with different capacities and mountable surfaces. The complexity and challenge of these designs make them an endless learning experience. I’ve worked on designs for convention and exhibition centres, sports stadiums, horse racing venues and auditoriums. My approach to designing these spaces has largely remained consistent over the years even though the deployed technology has changed drastically. I require many visits to site, hours analysing maps and floor plans, and multiple nights of disturbed, dream-filled sleep with the occasional moment of epiphany. It is not uncommon for a solution to a complex design to be masterminded through sleep.

I must acknowledge the many colleagues and mentors who have helped me overcome a great many design challenges. Through technology I have bridged international gaps and brought experts into the environment with me, using tools such as Skype and FaceTime so they can see the spaces I need to cover and observe the obstructions we can utilise. I once found an iPad application which allowed me to record a video and narration of a rudimentary sketch of an area while I explained my trigonometry-based analysis of the space and how I might cover it with a particular angled directional antenna. The trials of many before me have made much of the work in this space more approachable and comprehensible. Even vendor documentation is improving to allow for better communication with customers. Validated reference designs and the like really help to educate readers (both designers and customers) and also enhance dialogue surrounding expectations.

No design is truly complete without measuring the most important metric. The metric of user experience is difficult to quantify and inherently burdensome to measure.
These metrics, when they are collected, should be easily correlated to performance events as monitored at an infrastructure level, yet even this data, in 2017, can be hard to come by. This topic is commonly discussed at our industry's conferences and in online forums, and it will take time and experts at all levels to solve together. Being part of this type of collaboration is the pinnacle of all the years of experience and training that we go through in our careers. It’s inspiring to meet with peers who are willing to strive openly with others to enhance outcomes.

I thought I would point out a YouTube video that I think is really cool. It's on a channel I follow by Daniel Riley, a guy in middle America who flies remotely piloted aircraft. The videos consist of him flying various things for entertainment (his and ours) and sharing his adventures. The FPV capability gives him a first person video feed from the aircraft itself, which he views through a special headset. The quality of this video is often poor, but he also captures high quality video with onboard storage that he can then edit into his videos. The sophistication in the videography and editing behind his videos is quite a feat that could easily go unacknowledged. I notice it, and it's awesome!

Daniel gets to apply RF in practical ways that are so different to what I do that I really am in awe! Different frequencies from all kinds of antennas with different applications - including aircraft control, FPV video, backup links and probably others that are less obvious...

This particular video is especially cool because he filmed it, mid-flight, during the Total Solar Eclipse in the USA on 21st August 2017. It's funny that Daniel was so dedicated to filming such a great event (and, I agree, it was completely worth it) that he left his friends, who were celebrating the Eclipse together in the forest, to find a decent flight area. Thanks so much!
I haven't yet had the pleasure and experience of being present for a total eclipse that I can remember. But this most recent one in August 2017 has brought so much great footage to the Internet that we all get to watch it, at least by proxy, around the world.

It isn't Wi-Fi as we know it... but it's a pretty cool use of RF. I highly recommend you check out and subscribe to rctestflight on YouTube if you liked this video and want to see more.

https://www.youtube.com/user/rctestflight

Risk should be the focus of IT and Network Engineers in 2017. It sounds boring, or maybe like it’s “not my problem”, but many of the threats facing organisations today could be mitigated by careful analysis and risk mitigation strategies. I often use Stuxnet as an example, in what is ultimately a flawed analogy, when talking to clients about risk. Stuxnet most probably did not reach its target via a network, but it is the most memorable internally resident threat that I can think of. It’s threats of this type which are currently the most overwhelming risk to organisations' data and productivity. The threat acts from within the system and sometimes it’s successfully authenticated to the network. We need to think about networks and interconnectivity differently.

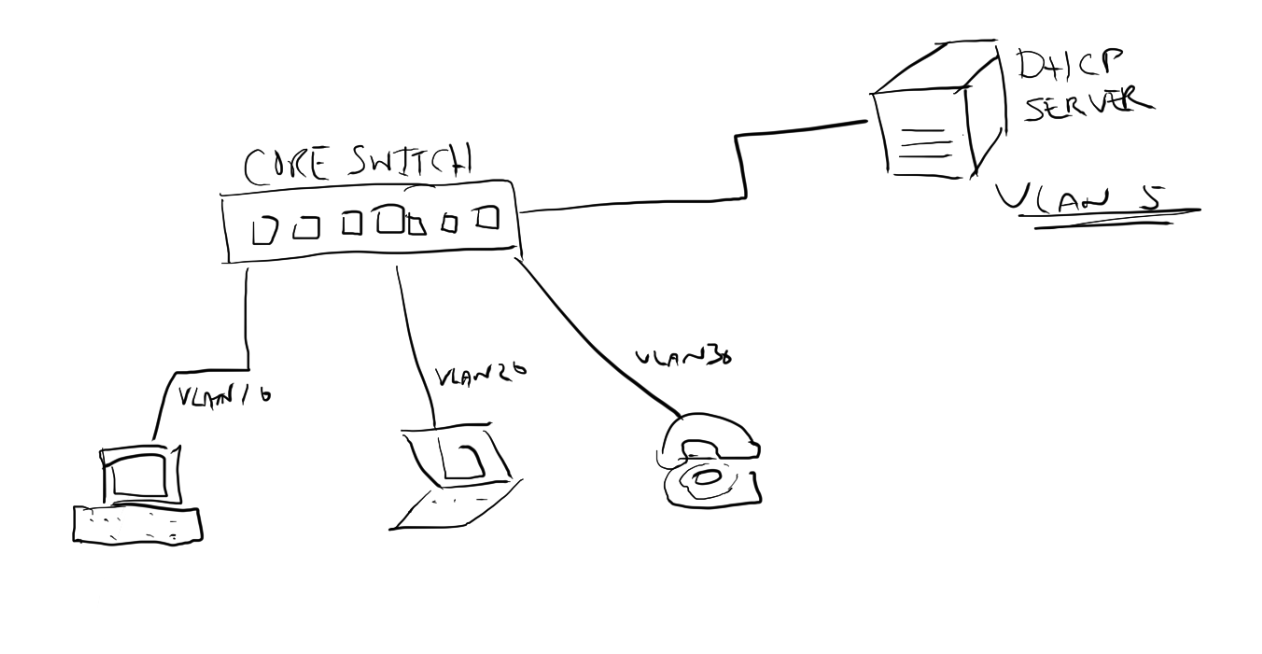

The benefit of successful risk mitigation is a reduction in downtime for users, or maybe it’s zero financial loss to an organisation. Pretty compelling, right? The problem we face with computers, as we have learned over time, is that we cannot make risk equal to zero. We fight the battle with one threat and a new one appears. Software and hardware inherently hold a flaw somewhere along the way that can be compromised, and there is a growing bucket of humans who are incentivised to find and take advantage of such flaws. Though we can’t win every battle, it should be our duty to reduce the impact of the battles we happen to lose. Through frequent analysis of risk we can strive to better prioritise our tactics and tools for risk mitigation.

Before WPA2 was available I worked my way through a Microsoft document to implement 802.1X for my wireless network. I walked through what felt like hours of steps to deploy a root CA certificate to clients, sign a certificate for a Windows IAS RADIUS server and create a few rules that allowed devices to authenticate to the network. At the time it was a huge feat and a largely unacknowledged win for security in my organisation. It was probably the best network access control of the time, short of some attributes to define appropriate VLANs. When WPA2 emerged I was amazed at how simply I could convert my previous configuration to support the new standard. Back then it was all new and mostly magical to me.

Today I consult for organisations who need to enhance their network access as a basic step in risk mitigation. We make the authentication process more resilient by intelligently profiling devices as they connect to the Wi-Fi and flagging or denying devices that don’t match that profile. We enhance the authentication process so that not only does the server authenticate the client, but the client also authenticates the server to be sure it is legitimate.
I particularly love drawing huge whiteboard diagrams of Wi-Fi based client devices and user types so we can profile who and what connects and best determine the appropriate level of access to grant. For most, these alone are large leaps forward; ACLs and traffic controls are a thought for the future. In my opinion layer 2-4 traffic rules should be applied as a baseline to enhance the efficiency of Wi-Fi networks as well as reduce the impact of network-traversing threats. With experience and confidence in building networks with these types of controls, Network Engineers will be empowered to take advantage of the next wave of risk mitigation.

We are finally on the cusp of networks having a level of self-awareness where real-time analysis can determine the flows of application traffic and actions can be taken to enhance or destroy the transport of the application's data. Large analyst firms are helping vendors sell new and exciting technology by building infographics showing statistics of how threats are internally born vs the “legacy” external, in-bound attacks. It’s true that we pretty much have the perimeter, the Internet facing border of our networks, locked down with firewalls. It’s been that way for years. But some of the same technology that empowers our “Next Generation Firewalls” can be employed within the network because of the enhanced processing capability available today. We are seeing emerging tools which allow live analysis and risk profiling of devices and users, which highlight potential internal threats as they emerge and enable action or further investigation. It’s these types of tools that will take us beyond simple authentication at the point of connection and static traffic rule-sets, to dynamic authorisation and access control.

Wi-Fi networks have had deep packet inspection capability for a few years now, allowing Network Engineers to prioritise or block network applications.
This type of capability is becoming more widely available, even across the entire campus network. We will see big data collection and increasingly smart machine-learning capabilities in this space. This will allow us to make better networks that are more finely tuned to the needs of our users and, critically, will help us to reduce risk.

There are a bunch of features built in to network switches that generally go unused because, simply, a switch will do its job right out of the box. After powering up a layer 2 or 3 managed switch and patching in computers and other network clients, connectivity is achieved. But if that is all that is done then the wrong switch was purchased to begin with. Having a managed switch implies some configuration is likely to take place. The Layer 2 or 3 (or other) specification starts to indicate how much functionality is built in. Today, some features are typically included across the board, such as Spanning Tree or DHCP Snooping.

DHCP Snooping allows your switch to keep an eye out for DHCP responses to make sure they are coming from reliable sources, reducing the places in your network where a rogue DHCP server could operate. It does this by ensuring the DHCP responses from a server come from a trusted port and trusted IP address. It’s a simple mechanism that in simple networks could be an easy risk reduction tool, helping to close off an attack vector.

Network security should be important to us all, right? Still, this might not be an obvious risk to everyone. What’s the risk of multiple DHCP servers? Check out http://hakipedia.com/index.php/DHCP_Rogue_Server and for more detail go here: http://seclists.org/vuln-dev/2002/Sep/99

"Rogue DHCP servers can be stopped by means of intrusion detection systems with appropriate signatures, as well as by some multilayer switches, which can be configured to drop packets."

How can you find a rogue DHCP server?
Here is a quick YouTube video showing how Wireshark can be used as a tool to identify a rogue DHCP server.

Let’s take a look at a simple configuration (performed on an HPE Aruba 3810M switch) in a network where the DHCP server is connected to switch port 10 and its IP address is 10.0.0.10:

    dhcp-snooping authorized-server 10.0.0.10
    dhcp-snooping trust 10
    dhcp-snooping

You can see we set the server IP address 10.0.0.10 as an authorised server and trust port 10. Then dhcp-snooping enables the function.

If you are using a switch or other device as an IP helper or DHCP relay then you will most likely need to make that device an authorised DHCP server in the DHCP Snooping configuration as well. Take the following network diagram as an example. The DHCP server is sitting on an entirely different network to the network devices that wish to obtain an IP address. Because DHCP is designed to work via broadcast frames on a single subnet, the DHCP requests would never reach the server on their own. This is where the switch IP Helper Address comes in. The switch will receive the DHCP requests on each of the client VLANs and forward the request on to the DHCP server. If the switch itself is not defined as an authorised server, the frame returning from the DHCP server will not be forwarded on to the client.

Let’s take a look at how DHCP Snooping should be configured for this network.

    dhcp-snooping authorized-server 10.0.0.10
    dhcp-snooping authorized-server 10.0.10.1
    dhcp-snooping authorized-server 10.0.20.1
    dhcp-snooping authorized-server 10.0.30.1
    dhcp-snooping trust 10
    dhcp-snooping vlan 10
    dhcp-snooping vlan 20
    dhcp-snooping vlan 30
    dhcp-snooping

So here we have a configuration to authorise the DHCP server IP address and each of the IP interfaces within the switch for each VLAN. It’s this IP interface that will respond to DHCP requests from clients and therefore must be a trusted or authorised server. Switch port 10 is trusted as that is the uplink to the DHCP server.
DHCP snooping is enabled on VLANs 10, 20 and 30, and finally DHCP snooping itself is enabled.

Note that if you have multiple hops to the DHCP server you should trust the uplinks (all of them in the case of redundant links) that will eventually lead to the DHCP server on switches further down the link chain.

Configuration examples shown above are from Aruba switches (3810M and 2930F) running ArubaOS-Switch version 16.03. This configuration will work across other HPE Aruba switches running similar software and is most likely the same configuration for older, non-Aruba-branded HPE ProCurve switches.

The Microsoft TechNet Wiki article on How to Prevent Rogue DHCP Servers on your Network calls out the DHCP Snooping capability on network equipment: https://social.technet.microsoft.com/wiki/contents/articles/25660.how-to-prevent-rogue-dhcp-servers-on-your-network.aspx

\* While some features may be considered common, it is always best to consult a product data-sheet or specifications documentation for each switch (or any networking product) when purchasing to ensure it covers your requirements. While some features have been adopted as an industry standard, others may be proprietary or have been implemented differently on one vendor’s product compared to another.
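
To make the trusted port and authorised server checks a little more concrete, here is a minimal Python sketch of the decision as I picture it for each DHCP message. It is an illustration only and not how ArubaOS-Switch implements the feature: the `SnoopingPolicy` class, its field names and the `permits` method are all made up for this example, and treating an empty authorised server list as "any server on a trusted port" is my assumption about the behaviour. The values mirror the multi-VLAN configuration above.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from ipaddress import IPv4Address

# DHCP message types that only a server should ever send (RFC 2131).
SERVER_MESSAGES = frozenset({"OFFER", "ACK", "NAK"})


@dataclass
class SnoopingPolicy:
    """Hypothetical model of a switch's DHCP snooping settings."""

    trusted_ports: set[int] = field(default_factory=set)
    authorised_servers: set[IPv4Address] = field(default_factory=set)
    snooped_vlans: set[int] = field(default_factory=set)

    def permits(self, vlan: int, port: int, message: str, source_ip: str) -> bool:
        """Return True if this sketch would forward the DHCP message."""
        if vlan not in self.snooped_vlans:
            return True  # snooping is not enabled on this VLAN
        if message not in SERVER_MESSAGES:
            return True  # client messages are not filtered in this sketch
        if port not in self.trusted_ports:
            return False  # server reply arriving on an untrusted port
        # Assumption: with no authorised servers listed, any server on a
        # trusted port is accepted; otherwise the source address must match.
        if not self.authorised_servers:
            return True
        return IPv4Address(source_ip) in self.authorised_servers


# Values taken from the multi-VLAN example configuration.
policy = SnoopingPolicy(
    trusted_ports={10},
    authorised_servers={
        IPv4Address(a)
        for a in ("10.0.0.10", "10.0.10.1", "10.0.20.1", "10.0.30.1")
    },
    snooped_vlans={10, 20, 30},
)

if __name__ == "__main__":
    # Legitimate server reply arriving on the trusted uplink.
    print(policy.permits(20, 10, "OFFER", "10.0.0.10"))   # True
    # Rogue server plugged into an ordinary access port.
    print(policy.permits(20, 5, "OFFER", "10.0.20.99"))   # False
```

The sketch checks the port first and the address second, so a rogue server on an access port is rejected even if it spoofs an authorised address.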
